RAO: A New Era of Optimization Replacing SEO

Introduction

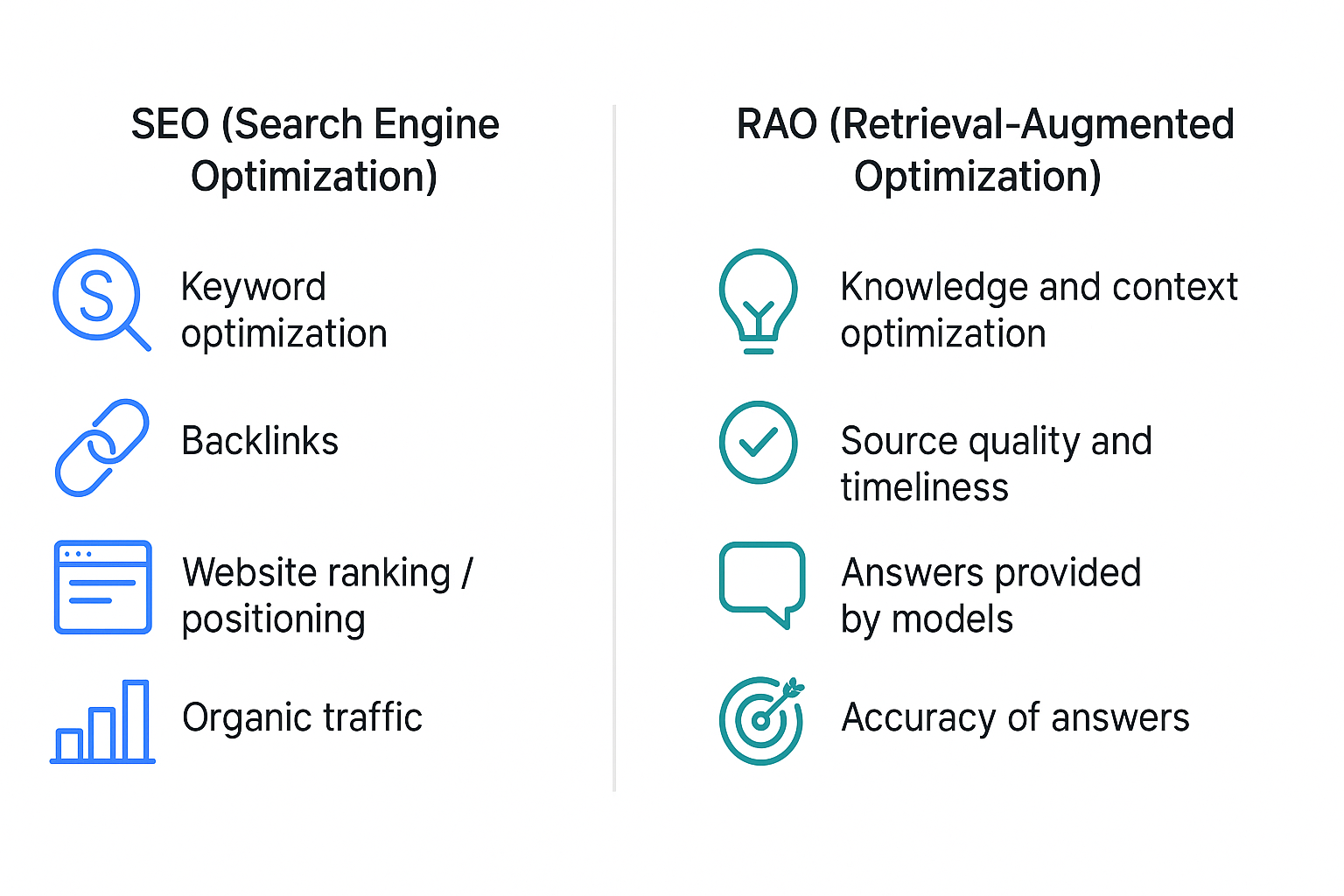

Retrieval‑Augmented Optimization (RAO) is a new way of thinking about online visibility — simple, effective, and tailored to AI-based search engines. Thesis: RAO can replace classic SEO because it understands context and user needs better than traditional optimization techniques.

In the simplest terms, RAO means that AI systems combine intelligent document retrieval with content generation and evaluation to deliver the most relevant answer to the user. Unlike classic SEO, which focuses on keywords, links, and site structure, RAO assesses the value of information in real time and prefers content that best matches the query.

The issue is that algorithms and AI operate differently: algorithms execute programmed rules, while AI learns and adapts — which gives RAO an advantage but requires constant oversight and quality data. Additionally, automation in social media and marketing shows how AI can scale personalization and reporting, yet it needs human supervision to avoid errors and biases.

In the rest of the article I present practical tips and examples of RAO implementations (from content audits to publication automation) and show how Lumi Zone helps companies transition to RAO step by step. Read on for a practical action plan. The following sections refer to the materials listed under Further reading.

How RAO Works: the mechanics of Retrieval‑Augmented Optimization and what it means for your site

Retrieval‑Augmented Optimization (RAO) is an approach that combines classical information retrieval with generative language models. In practice this means: instead of relying solely on static SEO signals (keywords, links), the system first retrieves relevant content snippets, and then generates a condensed, tailored response. This retriever + reader combination changes the way content is evaluated and served.

Technical elements of RAO — explained simply

- Retriever — the module that quickly finds documents or fragments matching the query. Think of it as an intelligent filter: it doesn't return the entire database, only the most promising pieces.

- Index (vector index) — the way content is stored as vectors, enabling fast comparisons. Instead of searching each document word by word, we compare vectors.

- Embeddings — representations of text as numerical vectors that "capture" the meaning of sentences and documents. It's thanks to embeddings that the retriever understands that "buying a car" and "purchase of a car" are similar intents.

- Reranker — the ordering layer. After initial filtering the retriever can return hundreds of passages; the reranker evaluates them more precisely and sorts them with the best first.

- Generative reader — a language model that combines retrieved passages and generates a coherent answer. It formulates the text, summarizes or compares information, often including references to sources.

- Vector databases — specialized engines (e.g. Pinecone, Milvus, or other open-source solutions) that store embeddings and enable very fast semantic search.
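To make the embeddings, index and retriever ideas concrete, here is a minimal sketch in plain Python. The three-dimensional vectors are hand-made, hypothetical stand-ins for real embeddings (which come from an embedding model and typically have hundreds of dimensions), and the dictionary stands in for a vector database.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical toy "index": text mapped to a hand-made 3-dimensional vector.
INDEX = {
    "buying a car": [0.9, 0.1, 0.0],
    "purchase of a car": [0.85, 0.15, 0.05],
    "baking bread": [0.0, 0.2, 0.95],
}


def retrieve(query_vector, index, top_k=2):
    """Return the top_k (text, score) pairs, most similar first."""
    scored = [
        (text, cosine_similarity(query_vector, vector))
        for text, vector in index.items()
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# A query vector that lies close to both car-related entries.
results = retrieve([0.88, 0.12, 0.02], INDEX)
for text, score in results:
    print(f"{score:.3f}  {text}")
```

Both car phrases score far above the unrelated entry even though they share few exact words, which is the behavior the embeddings bullet describes. A production system would replace the linear scan with an approximate nearest-neighbor index.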

The role of source quality, freshness, and prompting

RAO heavily depends on the quality and freshness of indexed content. If the corpus contains outdated or incorrect materials, the generated answer will be equally unreliable — hence the need for validation and audits (this brings us back to the difference between algorithms and AI: algorithms execute rules, AI learns from data — so good data are important). Prompting (how and what you provide the model as context) influences the style, scope, and accuracy of the response — a well-formulated prompt can increase precision and reduce the fabrication of facts.
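One way to picture the prompting step is a small helper that packs retrieved passages, together with their source and date, into the context given to the model. This is only a sketch: the function name `build_prompt`, the passage keys (`source_id`, `last_updated`, `text`) and the instruction wording are illustrative assumptions, not a prescribed format.

```python
def build_prompt(question, passages):
    """Assemble a grounded prompt from retrieved passages.

    Each passage is a dict with the hypothetical keys
    'source_id', 'last_updated' and 'text'.
    """
    context_lines = [
        f"[{number}] ({p['source_id']}, updated {p['last_updated']}) {p['text']}"
        for number, p in enumerate(passages, start=1)
    ]
    return "\n".join(
        [
            "Answer using only the numbered sources below.",
            "Cite sources as [n]. If the sources do not contain the answer, say so.",
            "",
            "Sources:",
            *context_lines,
            "",
            f"Question: {question}",
        ]
    )


prompt = build_prompt(
    "How do I reset my password?",
    [
        {
            "source_id": "faq-12",
            "last_updated": "2025-01-10",
            "text": "Use the Forgot password link on the login screen.",
        }
    ],
)
print(prompt)
```

Carrying the date and source identifier into the prompt lets the model prefer fresher passages and cite what it used, which supports the freshness and traceability points above.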

RAO vs traditional SEO — comparison of ranking signals

- Semantics: SEO relies largely on keyword matching; RAO uses embeddings, so meaning matters, not just the occurrence of a phrase.

- Freshness: Traditional SEO rewards durable assets (links, authority). RAO additionally favors fresh, up-to-date sources because generative models need the latest data.

- Answer coherence: RAO assesses whether the answer is complete, unambiguous, and useful — not just whether a page has a lot of content.

- Data source and authorship: In SEO, domain authority matters; in RAO the credibility of the sources used to generate the answer and the ability to reference them (traceability) also count.

Examples of queries where RAO wins

- Conversational queries: "How to optimize customer onboarding in SaaS with a limited team?" — RAO aggregates best practices from various articles and creates a concrete plan.

- Business decisions: "Compare the costs and ROI of an email campaign vs social media for a small e-commerce business" — RAO gathers current case studies and computes the proposed metrics.

- Aggregation of current data: "What are the latest changes in tax regulations for freelancers in 2025?" — RAO can combine fresh sources and indicate the dates of changes.

Reliability metrics — what to measure in RAO

- Factuality of answers: the percentage of generated statements that can be verified in sources.

- Traceability (source tracking): does the system provide the related sources and passages it used.

- Time‑to‑answer: the speed of generating a reliable answer — crucial in customer support and business dashboards.

- Retriever precision/recall: how well the retriever finds relevant passages without excess “noise”.
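Two of these metrics are simple ratios and can be sketched in a few lines. The example below assumes you already have a hand-labeled set of relevant document IDs per query and a per-statement verification result from reviewers; the function names and sample IDs are hypothetical.

```python
def precision_recall_at_k(retrieved_ids, relevant_ids, k):
    """Precision and recall of the first k retrieved IDs against a labeled set."""
    top_k = retrieved_ids[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant_ids)
    precision = hits / len(top_k) if top_k else 0.0
    recall = hits / len(relevant_ids) if relevant_ids else 0.0
    return precision, recall


def factuality_rate(statement_checks):
    """Share of generated statements that reviewers could verify in a source.

    statement_checks is a list of booleans, one per statement.
    """
    if not statement_checks:
        return 0.0
    return sum(statement_checks) / len(statement_checks)


# Retriever returned four passages; three documents are truly relevant.
precision, recall = precision_recall_at_k(
    retrieved_ids=["a", "b", "c", "d"],
    relevant_ids={"a", "c", "e"},
    k=3,
)
print(f"precision@3={precision:.2f} recall@3={recall:.2f}")

# Three of four statements in an answer were verified against sources.
print(f"factuality={factuality_rate([True, True, False, True]):.2f}")
```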

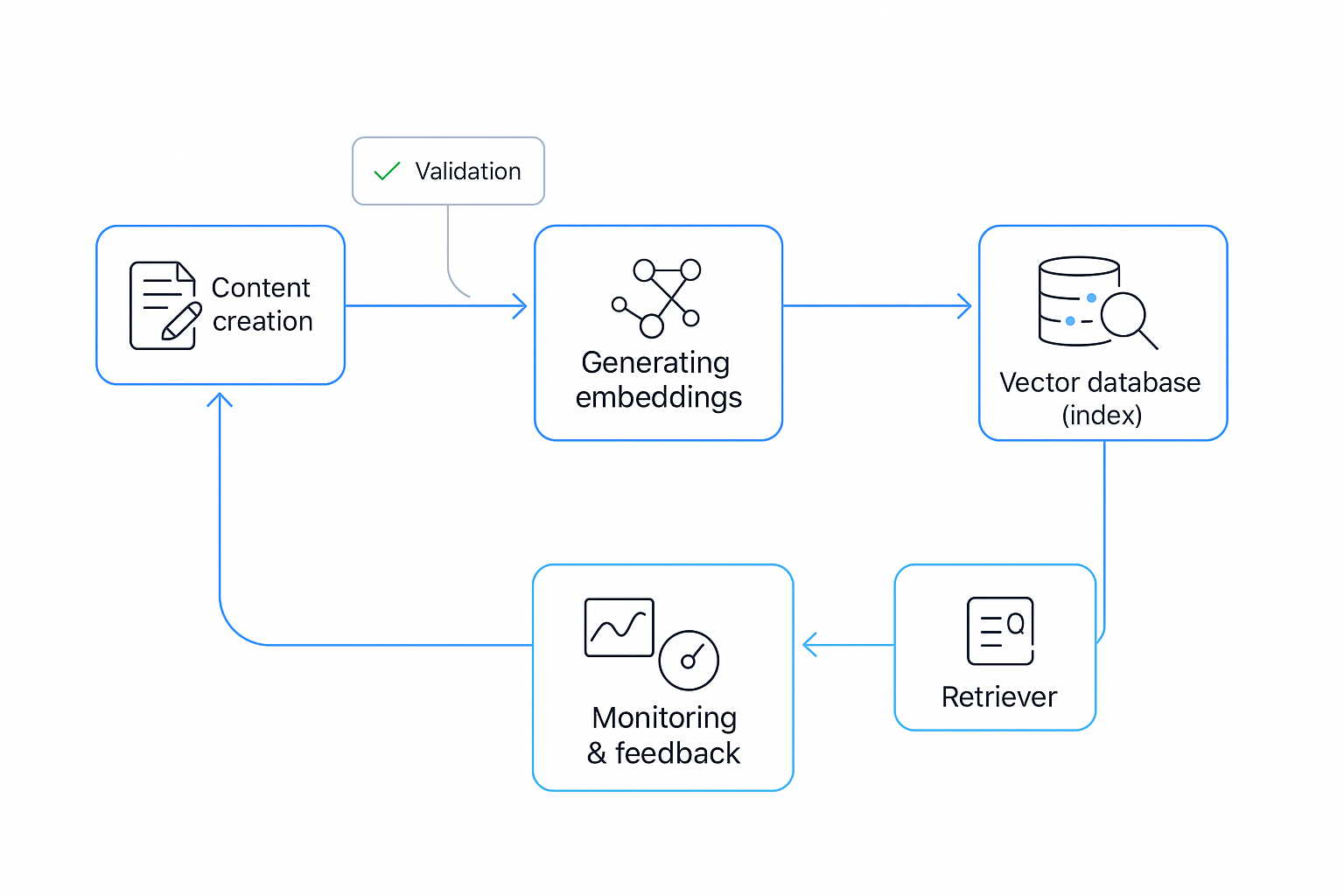

A short practical illustration — sample RAO workflow

- Publishing content (article, documentation, report).

- Indexing in a vector database — creating embeddings and updating the index.

- The retriever finds the most relevant passages relative to the user’s query.

- The reranker orders the results, selecting the best passages.

- The generative reader combines passages and creates a coherent answer, including references to sources.
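The steps above can be sketched end to end with stand-ins for each component. Everything here is a simplifying assumption: `ToyIndex` matches on shared words instead of embeddings, `rerank` uses Jaccard overlap instead of a trained reranking model, and `generate_answer` only stitches passages together where a real system would call a language model.

```python
import re
from dataclasses import dataclass


@dataclass
class Passage:
    source_id: str
    text: str


def tokenize(text):
    """Lowercase word set; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ToyIndex:
    """Stand-in for a vector database: stores token sets, not vectors."""

    def __init__(self):
        self.entries = []

    def add(self, passage):
        self.entries.append((passage, tokenize(passage.text)))

    def retrieve(self, query, top_k=3):
        """Coarse first pass: rank by number of shared words."""
        query_tokens = tokenize(query)
        scored = [(len(query_tokens & tokens), p) for p, tokens in self.entries]
        scored = [item for item in scored if item[0] > 0]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [p for _, p in scored[:top_k]]


def rerank(query, passages):
    """Finer second pass: Jaccard overlap penalizes long, diluted passages."""
    query_tokens = tokenize(query)

    def jaccard(passage):
        tokens = tokenize(passage.text)
        return len(query_tokens & tokens) / len(query_tokens | tokens)

    return sorted(passages, key=jaccard, reverse=True)


def generate_answer(passages, max_passages=2):
    """Stand-in for the generative reader: joins passages and cites them."""
    used = passages[:max_passages]
    body = " ".join(f"{p.text} [{p.source_id}]" for p in used)
    return body, [p.source_id for p in used]


index = ToyIndex()
index.add(Passage("docs-1", "Reset your password in account settings."))
index.add(Passage("faq-7", "To reset a password, click Forgot password on the "
                           "login screen and follow the email link."))
index.add(Passage("blog-3", "Our team visited a conference in Berlin."))

query = "how to reset password"
candidates = index.retrieve(query)
ranked = rerank(query, candidates)
answer, sources = generate_answer(ranked)
print(answer)
print(sources)
```

In this toy run the first pass ranks the longer FAQ entry highest because it shares more words with the query, and the second pass moves the shorter, more focused passage to the top. That two-stage split is the reason a reranker sits between retriever and reader.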

RAO is not just technology; it’s a different way of thinking about online visibility. In practice it means better alignment of content with user intent and the ability to quickly aggregate up‑to‑date information. If you want to explore the technical and business implications, we recommend the articles on why AI makes mistakes and on AI in social media, both listed under Further reading.

At Lumi Zone we help design and automate this workflow — from building the index, through update schedules, to optimizing prompts and reliability metrics. After this explanation, in the next section we will show practical steps for implementing RAO with automation: how to build a pipeline, which low‑code/no‑code tools are worth using, and how to monitor answer quality.

Practical guide: how to move from SEO to RAO — concrete steps and technical checklists

The shift from traditional SEO to RAO (Retrieval‑Augmented Optimization) is not just a change in content strategy; it transforms the whole process of collecting sources, formatting, versioning and automatically sharing knowledge with the models that answer users. Below you’ll find a clear action plan, technical checklists and example workflows that Lumi Zone implements for clients using low‑code/no‑code tools (including n8n).

1. Content priorities — what you need to collect and organize

- FAQ and short answers: the most frequently asked questions, prepared as short, concrete answers (20–150 words).

- Current sources: product documentation, changelogs, policies, official articles — with dates and versions.

- Technical documentation: step-by-step instructions, code examples, API endpoints.

- Contextual metadata: author, publication date, product version, topical tags.

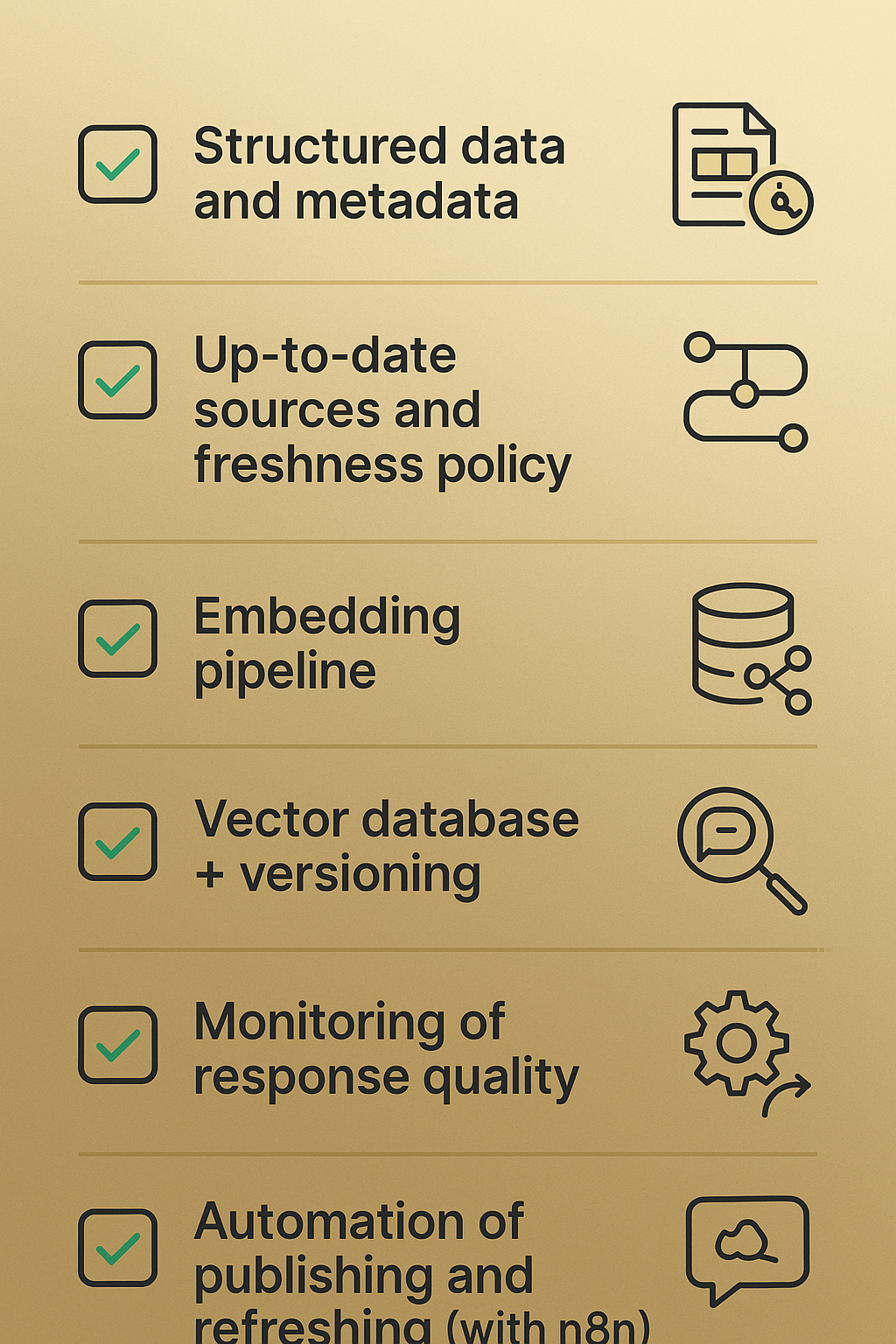

2. Technical elements to implement — checklist

- Data structuring: store content in a standardized format (JSON, or Markdown with front matter containing the metadata).

- Schema and unified metadata: define fields such as source_id, version, last_updated, intent, confidence_threshold.

- Update feeds: webhooks or RSS/JSON feeds for detecting changes in sources.

- Generating and automatically refreshing embeddings: configure a pipeline that generates new vectors on publish/update.

- Choosing a vector DB: prefer hosted solutions (Pinecone, Weaviate Cloud, Zilliz Cloud for managed Milvus) for SLA and scalability.

- Source versioning: tag versions and regenerate embeddings for the affected content after major changes.

- Security and access: access control for the vector DB and query logs, encryption of data in transit and at rest.
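As an illustration of the schema item in this checklist, the record below uses the field names mentioned there (`source_id`, `version`, `last_updated`, `intent`, `confidence_threshold`). The types, the validation rules and the interpretation of `confidence_threshold` as a value between 0 and 1 are assumptions made for this sketch, as is the extra `body` field.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class ContentRecord:
    source_id: str
    version: str
    last_updated: str  # ISO 8601 date, e.g. "2025-03-01"
    intent: str
    confidence_threshold: float  # assumed here to be a value in [0, 1]
    body: str

    def __post_init__(self):
        # Raises ValueError if the date is not a valid ISO 8601 date.
        date.fromisoformat(self.last_updated)
        if not 0.0 <= self.confidence_threshold <= 1.0:
            raise ValueError("confidence_threshold must be between 0 and 1")


record = ContentRecord(
    source_id="faq-7",
    version="2.1",
    last_updated="2025-03-01",
    intent="password_reset",
    confidence_threshold=0.8,
    body="Use the Forgot password link on the login screen.",
)

# Serialized form, ready to store next to the embedding in the vector DB.
payload = json.dumps(asdict(record), sort_keys=True)
print(payload)
```

Validating at construction time means a malformed date or threshold is rejected before the record reaches the embedding step, rather than surfacing later as a bad answer.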

3. Example tool stack and automatable workflows

- Orchestration: n8n as the main pipeline orchestrator (low‑code), for connecting CMS, repositories, embeddings and vector DB.

- Embedding tools: OpenAI embeddings, Cohere, Hugging Face Inference API — depending on cost and quality for your language.

- Hosted vector DBs: Pinecone / Weaviate Cloud / Zilliz Cloud (managed Milvus) for fast semantic search and scalability.

- Response quality monitoring: a set of metrics (semantic similarity, human feedback rate, hit/miss ratio) + Slack/Email alerts.

Example fully automatable workflows (n8n):

- Publication of an article in CMS → webhook to n8n → content and metadata extraction → generate embeddings → push to vector DB → test query (synthetic queries) → status OK/alert.

- Changed FAQ in repo → n8n fetches diff → rebuild embeddings only for changed records → update in vector DB → moderation notification for approval.

- Daily scan of sources (documentation, changelog) → change detection → batch embeddings + versioning → automatic fallbacks to the last stable version on error.
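The "rebuild embeddings only for changed records" step in these workflows needs some form of change detection. In n8n this would typically live in a code or function step; the sketch below shows the core logic in Python, assuming content hashes from the last indexing run are stored somewhere. The function names and sample IDs are hypothetical.

```python
import hashlib


def content_hash(text):
    """Stable fingerprint of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def plan_updates(current_docs, stored_hashes):
    """Decide which records to re-embed and which to remove.

    current_docs:  {source_id: text} as fetched from the CMS or repo now.
    stored_hashes: {source_id: hash} saved at the last indexing run.
    """
    to_embed = sorted(
        source_id
        for source_id, text in current_docs.items()
        if stored_hashes.get(source_id) != content_hash(text)
    )
    to_delete = sorted(
        source_id for source_id in stored_hashes if source_id not in current_docs
    )
    return to_embed, to_delete


stored = {
    "faq-1": content_hash("How to log in."),
    "faq-2": content_hash("Old refund policy."),
    "faq-3": content_hash("Removed entry."),
}
current = {
    "faq-1": "How to log in.",          # unchanged
    "faq-2": "New refund policy.",      # edited
    "faq-4": "How to export reports.",  # newly published
}

to_embed, to_delete = plan_updates(current, stored)
print("re-embed:", to_embed)
print("delete:", to_delete)
```

Only the edited and new records are sent to the embedding service, which keeps update cost roughly proportional to the size of the change instead of the size of the corpus.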

4. Monitoring, tests and response quality

- Automated tests: a set of control queries after each update — check top‑k retrieved, similarity score, and compliance with the source version.

- Human‑in‑the‑loop: editors spot-check a random sample of responses; their feedback flows back into the pipeline as corrections or training data.

- Business metrics: customer response time, response SLA, percentage of successful autosuggestions — monitored on the dashboard.
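The automated-test item above can be sketched as a small harness that runs control queries after each update. The `search` callable, the case format and the `min_score` threshold of 0.75 are assumptions for illustration; the source-version check is left out to keep the example short.

```python
def run_control_queries(search, cases, top_k=3, min_score=0.75):
    """Run control queries and return a list of (query, reason) failures.

    search(query, top_k) must return (source_id, score) pairs, best first.
    """
    failures = []
    for case in cases:
        results = search(case["query"], top_k)
        returned_ids = [source_id for source_id, _score in results]
        if case["expected_source"] not in returned_ids:
            failures.append((case["query"], "expected source not in top-k"))
        elif results[0][1] < min_score:
            failures.append((case["query"], "top similarity below threshold"))
    return failures


# Stub standing in for a real vector DB query, with made-up scores.
FAKE_RESULTS = {
    "how do I reset my password": [("faq-7", 0.91), ("docs-1", 0.84)],
    "what is the refund policy": [("blog-3", 0.52)],
}


def fake_search(query, top_k):
    return FAKE_RESULTS.get(query, [])[:top_k]


CASES = [
    {"query": "how do I reset my password", "expected_source": "faq-7"},
    {"query": "what is the refund policy", "expected_source": "policy-2"},
]

failures = run_control_queries(fake_search, CASES)
for query, reason in failures:
    print(f"FAIL: {query!r}: {reason}")
```

A non-empty failure list is the natural trigger for the Slack or email alert mentioned earlier, or for rolling back to the last stable index version.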

5. Business examples and effects — what you can expect

- Reduction of manual editorial work: automating embedding generation and push to DB can reduce manual updating by 60–80%.

- Faster content updates: time from publication to availability in the answer system drops from days to minutes (typically <30 min with a well-configured pipeline).

- Better conversion: more accurate, short answers and up-to-date info increase user satisfaction — often a conversion increase of 10–25% in customer service channels.

Lumi Zone specializes in low-code/no-code (n8n) implementations, publication automation and monitoring. We'll build a pipeline for you that will relieve the team, ensure source versioning and continuous refreshing of knowledge in models.

Mini‑case

Company X (SaaS): we automated the FAQ feed + embeddings pipeline (n8n → OpenAI embeddings → Pinecone). Result: time to make an updated answer available shortened from 48 hours to 25 minutes, and manual editorial work decreased by 70%. Conversion in the support form increased by 14% in three months.

Summary and conclusions

RAO is a natural next step: instead of focusing only on site visibility (SEO), we move to optimizing knowledge delivery — accurate, fast and contextual responses to user needs. It's a paradigm shift that allows companies to save time, increase conversions and better utilize the knowledge accumulated in the organization.

At the same time, remember the limitations: AI supports decisions but does not replace responsible oversight. Research clearly shows that learning systems require regular audits, data validation and ethical controls to avoid repeating errors or biases.

At Lumi Zone we offer practical steps: a free RAO audit/readiness analysis and a quick pilot using n8n and a vector DB, to show real benefits in 4–8 weeks. Our implementations combine low-code automation with an audit process so that the technology works safely and effectively.

Want to start? Arrange a free RAO audit — write to us at kontakt@lumizone.pl or via the form on the Lumi Zone website. Together we will plan the pilot and the schedule of ethical audits.

Further reading

- Why artificial intelligence makes mistakes – and what it means for business

- Artificial intelligence and social media (OOH Magazine)